TL;DR

Web caching involves storing copies of web files, like HTML pages or images, on a user’s device or intermediary servers. Employ efficient caching strategies like implementing caching policies, setting expiration times, and leveraging cache headers to minimize redundant data retrieval and reduce load times.

Of all the ways to improve website performance, caching is one of the most important.

And everyone who works online should at least know what web caching is.

Web caching (also known as HTTP caching) can get pretty complex. But in this guide, we’re keeping things simple.

Here, you’ll learn (or revise) the fundamentals:

- How the web works;

- Why every web service needs a caching layer;

- How web caching works;

- Advantages and benefits of HTTP caching;

- Caching types;

- How to set up caching rules (caching headers);

- Developing a caching strategy;

- Choosing a caching solution/plugin.

All of this is accompanied by examples of web caching in action.

Let’s dig in.


How The Web Works

To understand caching, we need a quick refresher on how the web works.

A great place to start is your website and origin server.

So, what is your website?

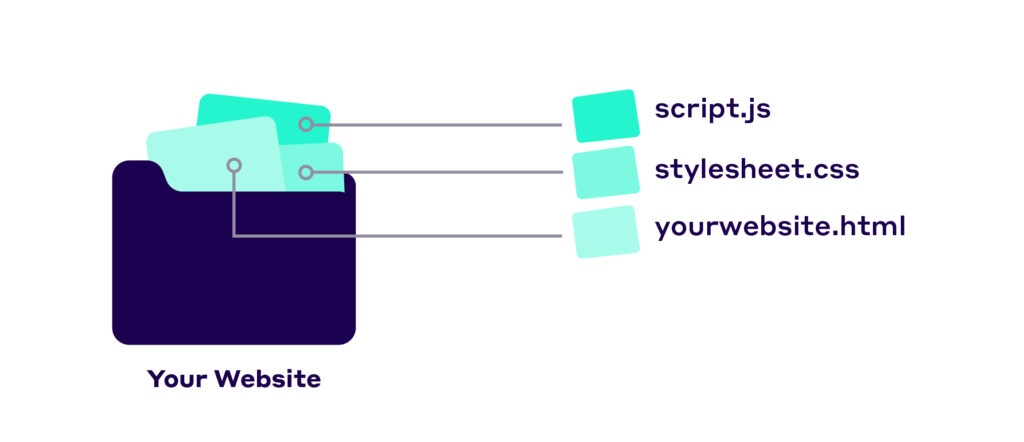

Well, it’s a collection of web pages that are grouped together.

You can also think of it as a collection of files – an HTML document, images, videos, CSS, and JavaScript files.

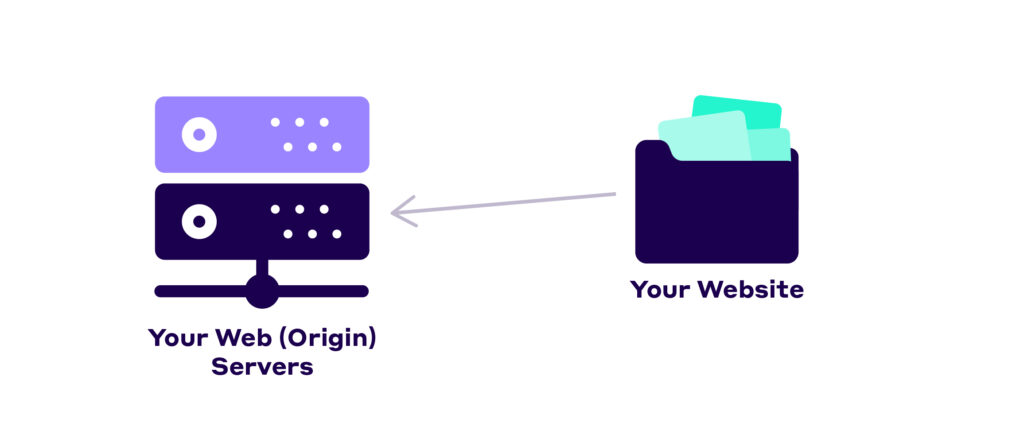

For people to access your website, it must be hosted on a machine connected to the web.

That machine is your origin (web) server. It’s typically one of many servers provided by your hosting company.

In reality, many hosting companies rent these servers from Amazon (AWS), Microsoft (Azure), or Google (GCP). But that’s another story.

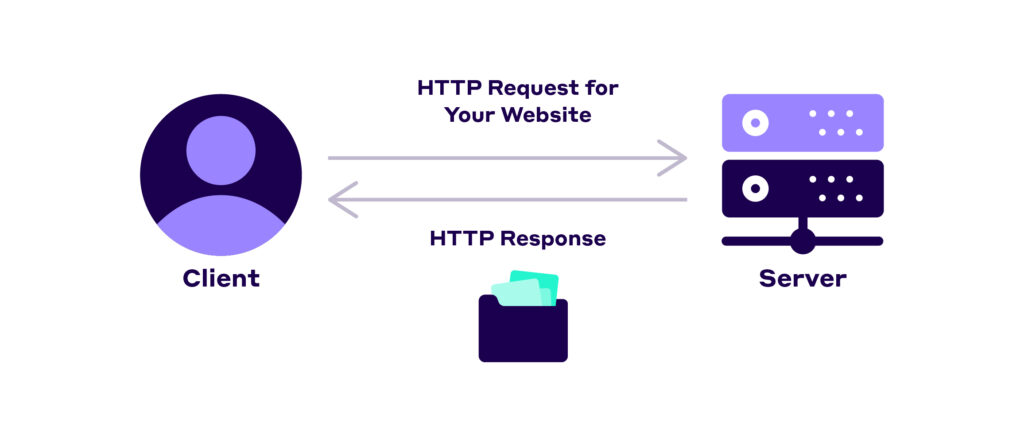

To access your website, visitors use their browsers (in this case called clients), which communicate with your server using HTTP requests and responses.

Now, there’s a whole process that goes on behind the scenes here.

In short, your browser does a DNS lookup that translates the domain name (e.g., yourwebsite.com) to an IP address (e.g., 63.46.23.184). Once the browser finds the address of the server where the site lives, it sends an HTTP request asking the server to send back a copy of the website.

Here’s how a typical HTTP request looks:

Once your web server receives this request, it sends back an HTTP response.

Here’s the response to the request above:

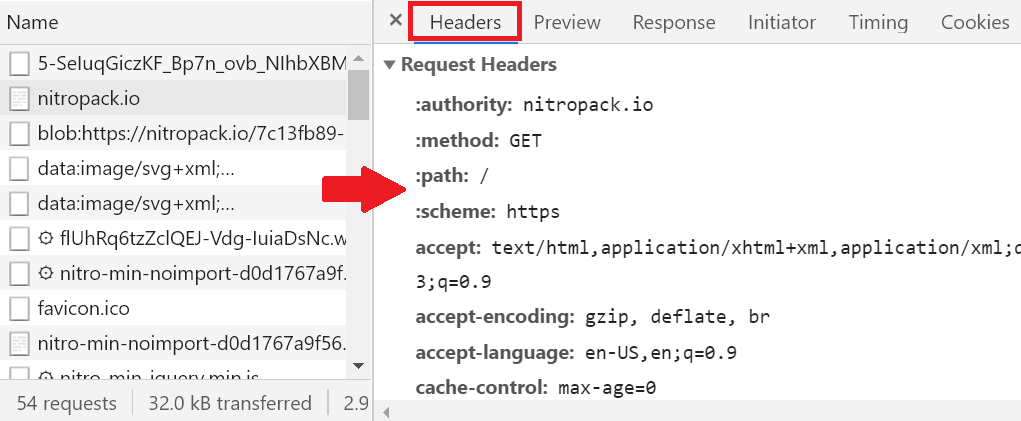

As you can see, both the client and the server use these things called headers. HTTP headers allow them to pass additional information when communicating.
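To make the request-response exchange a bit more concrete, here’s a minimal Python sketch. The request and response text below are made up for illustration (they aren’t captured from a real server), and parse_http_message is a hypothetical helper, not part of any library.

```python
# Illustrative HTTP messages (assumed values, not real captures).
RAW_REQUEST = (
    "GET /index.html HTTP/1.1\r\n"
    "Host: yourwebsite.com\r\n"
    "Accept: text/html\r\n"
    "\r\n"
)

RAW_RESPONSE = (
    "HTTP/1.1 200 OK\r\n"
    "Content-Type: text/html; charset=utf-8\r\n"
    "Cache-Control: max-age=3600\r\n"
    "\r\n"
    "<html>...</html>"
)


def parse_http_message(raw):
    """Split a raw HTTP message into (start line, headers dict, body)."""
    head, _, body = raw.partition("\r\n\r\n")
    lines = head.split("\r\n")
    start_line = lines[0]
    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        # Header names are case-insensitive, so store them in lowercase.
        headers[name.strip().lower()] = value.strip()
    return start_line, headers, body


start_line, headers, body = parse_http_message(RAW_RESPONSE)
print(start_line)                # HTTP/1.1 200 OK
print(headers["cache-control"])  # max-age=3600
```

Both messages share the same shape: a start line, a block of headers, a blank line, and an optional body. The caching rules live in that header block.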

Headers are the main tool for controlling your caching policy.

You can use them to set specific rules, like how long the response sent by the server should be cached for.

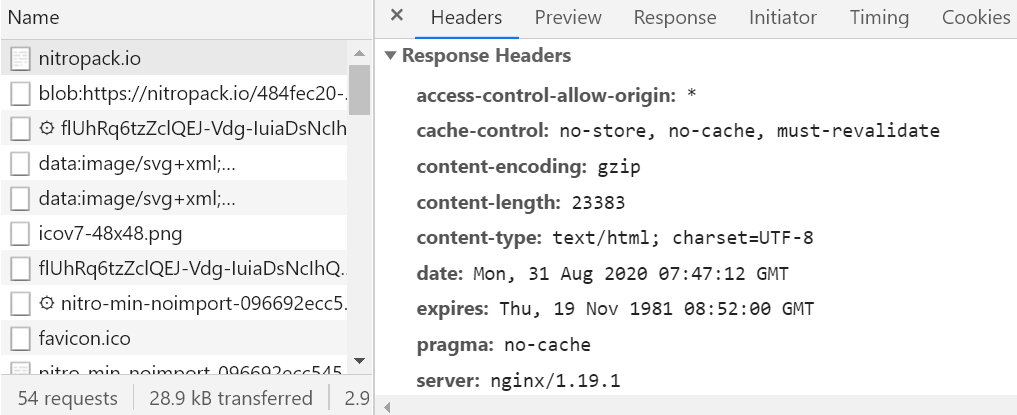

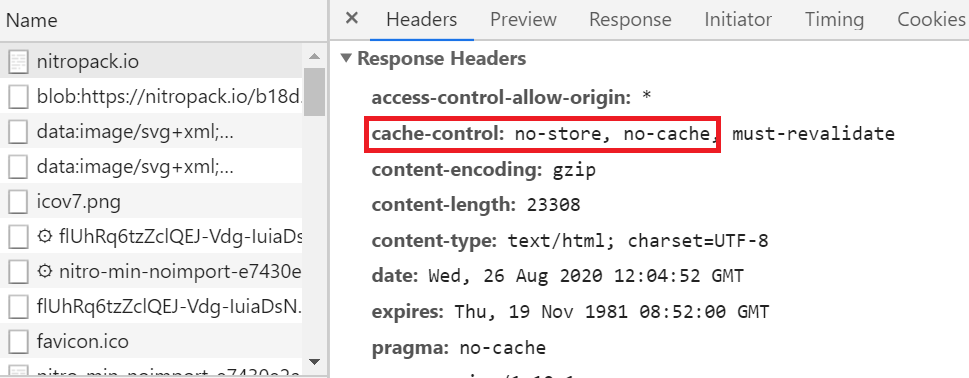

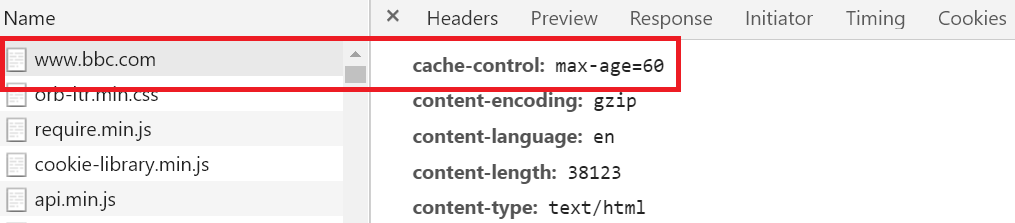

Here’s a typical caching header:

The cache-control header is pretty important when it comes to caching. We’ll go over it in detail later in this guide.

For now, let’s go back to your website and server.

Why Every Web Service Needs a Caching Layer

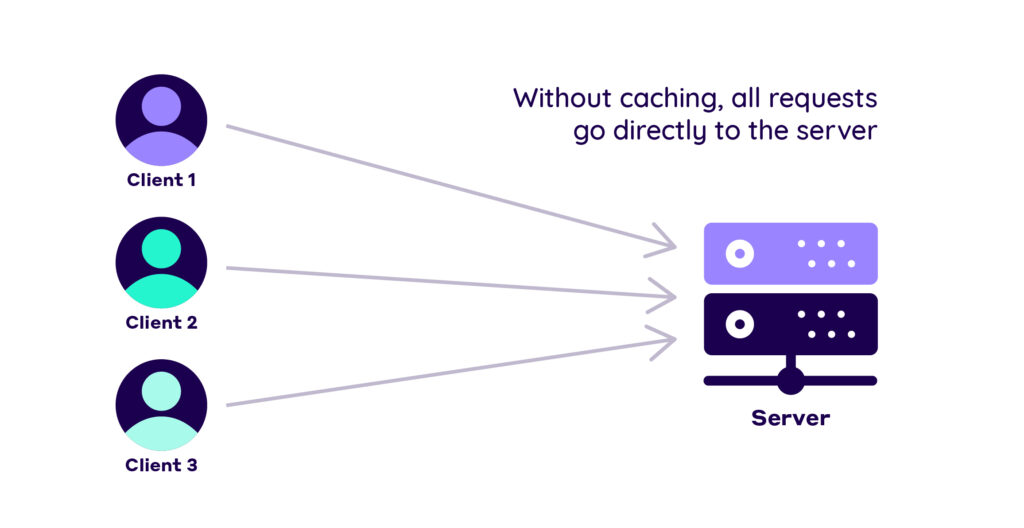

So, your website exists on your origin server.

Clients make requests when they want to browse your website. Your server responds and everyone’s happy.

Without caching, this model still works, but it has huge drawbacks.

First, your origin server has to handle all incoming requests.

That might be alright for 10 requests, but it’s not for 10,000.

Each server has a limit on how many requests it can handle simultaneously (this varies based on the server’s specs). Every request after that limit goes into a queue, resulting in longer loading times for the clients that end up in the queue.

Second, repeat visitors have to re-download the same files every time. That’s a massive waste of bandwidth.

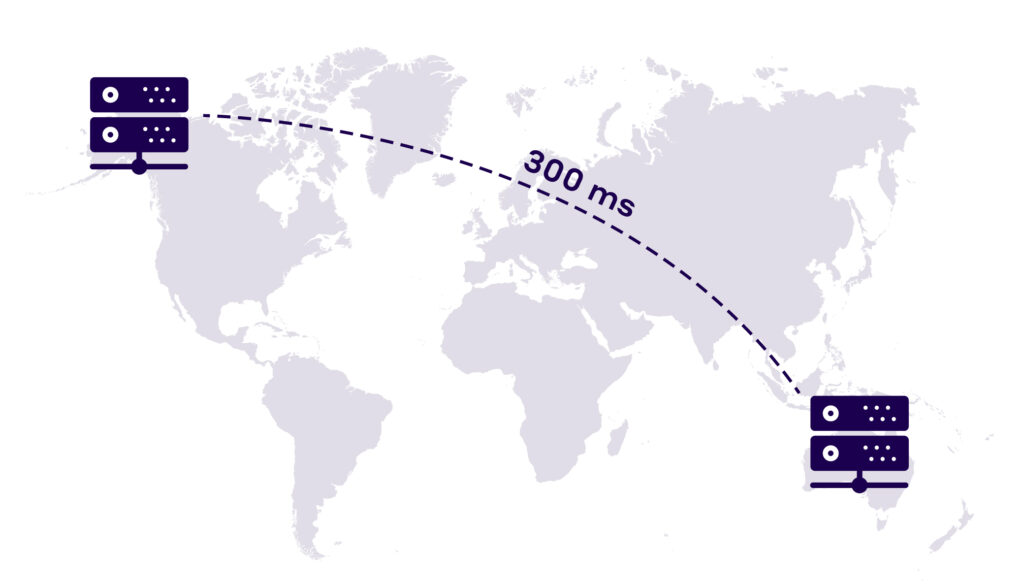

Third, your web server is probably pretty far away from most visitors.

For example, if your web server is in a data center in Ohio, data has to travel a huge distance to reach users in Europe, Africa, or Asia.

This increases the latency and makes your website slower than it should be.

Finally, with only one server or even a collection of servers in the same data center, you’re taking a massive risk.

One small outage and you’re looking at serious downtime. And since outages happen all the time, this is a risk you want to avoid at all costs.

Luckily for us, web caching solves all of these issues.

Here’s how.

How Web Caching Works

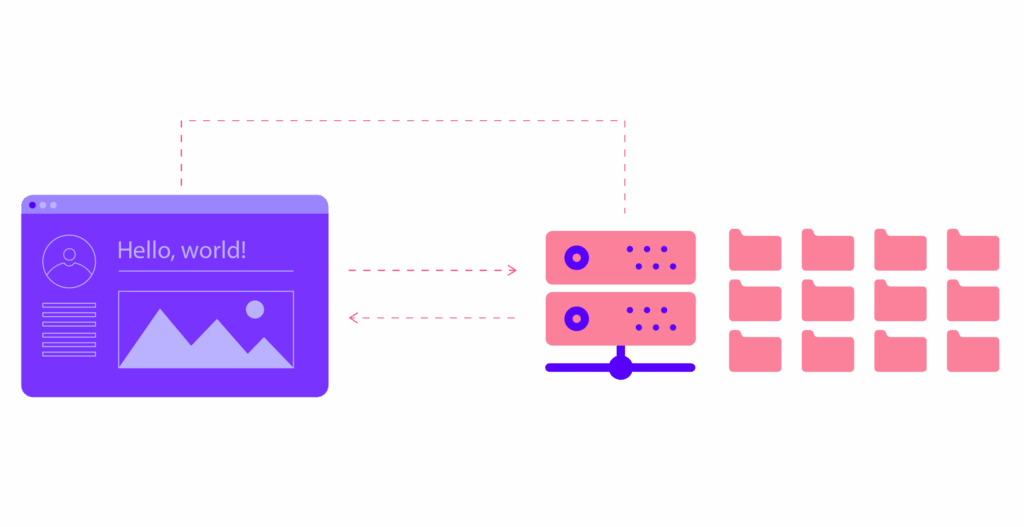

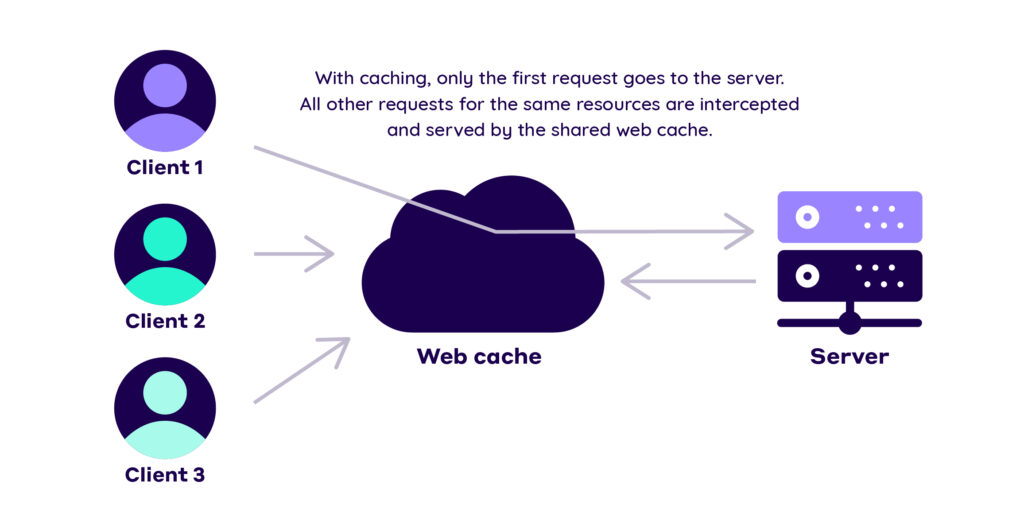

The essence of web caching is this:

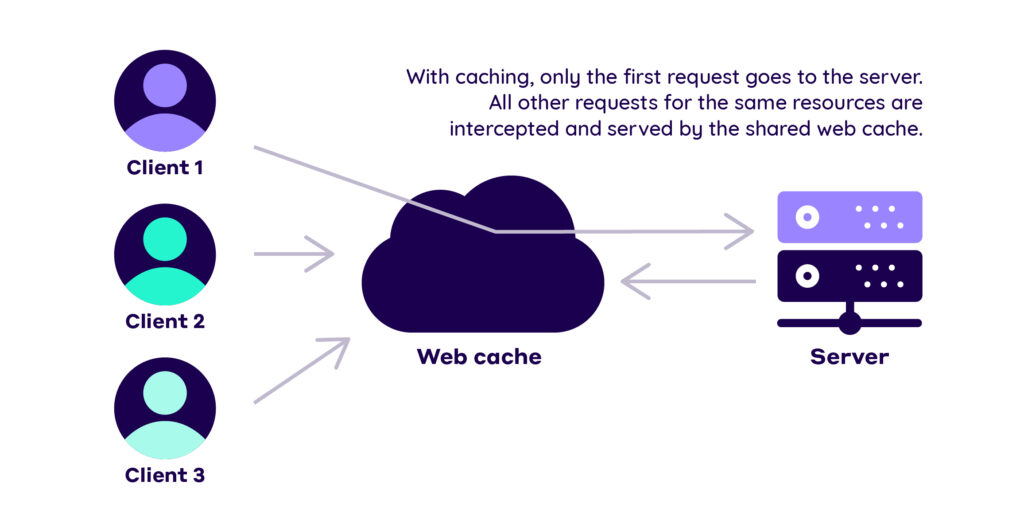

You store a copy of your website’s resources in a different place called a web cache.

Web caches are one of the many intermediaries on the web. Their job is to sit between the origin server and the user and save HTTP responses – HTML documents, images, CSS files, etc.

These saved responses are called representations since they only represent your original website at a specific point in time.

Web caches can track requests for these resources and serve them to clients.

That way, the request doesn’t have to go all the way to the origin server.

When the response is served from the cache, we have a cache hit.

On the flip side, if the cache can’t fulfill the request, we have a cache miss.

In most cases, you want to have a high cache hit ratio, i.e., most HTTP requests are successfully served from the cache.
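Here’s a toy sketch of hits, misses, and the hit ratio in Python. SimpleWebCache and fake_origin are hypothetical names invented for this example. A real web cache also tracks freshness, size limits, and headers, all of which are ignored here.

```python
class SimpleWebCache:
    """Toy in-memory cache keyed by URL (illustration only)."""

    def __init__(self, fetch_from_origin):
        self._fetch = fetch_from_origin
        self._store = {}
        self.hits = 0
        self.misses = 0

    def get(self, url):
        if url in self._store:
            # Cache hit: the request never reaches the origin server.
            self.hits += 1
            return self._store[url]
        # Cache miss: fetch from the origin and keep a copy for next time.
        self.misses += 1
        response = self._fetch(url)
        self._store[url] = response
        return response

    def hit_ratio(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def fake_origin(url):
    """Stand-in for the origin server."""
    return f"<response for {url}>"


cache = SimpleWebCache(fake_origin)
for url in ["/logo.png", "/logo.png", "/style.css", "/logo.png"]:
    cache.get(url)

print(cache.hits, cache.misses)  # 2 2
print(cache.hit_ratio())         # 0.5
```

Only the first request for each URL reaches the origin. Every repeat request is a hit, which is why popular, rarely changing files push the hit ratio up.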

So, how does caching solve the issues we discussed above?

Advantages And Benefits of HTTP Caching

First, your web server doesn’t have to handle each HTTP request.

In fact, most requests won’t even reach the origin server. This reduces its load and saves it from getting overwhelmed.

Second, repeat visitors don’t need to re-download the same resource every time.

A ton of your website’s resources can be cached for long periods of time, for example:

- Logos;

- Navigation images;

- Downloadable content;

- Media files.

Once cached, they don’t need to be downloaded again every time.

Of course, there are some items that you might want to avoid caching:

- Frequently modified JavaScript and CSS;

- Assets containing personal data (phone numbers, banking info, etc.);

- Content that’s shown to logged-in users;

- Cart pages.

Even with these exceptions, there are still many resources that are perfectly suitable for caching.

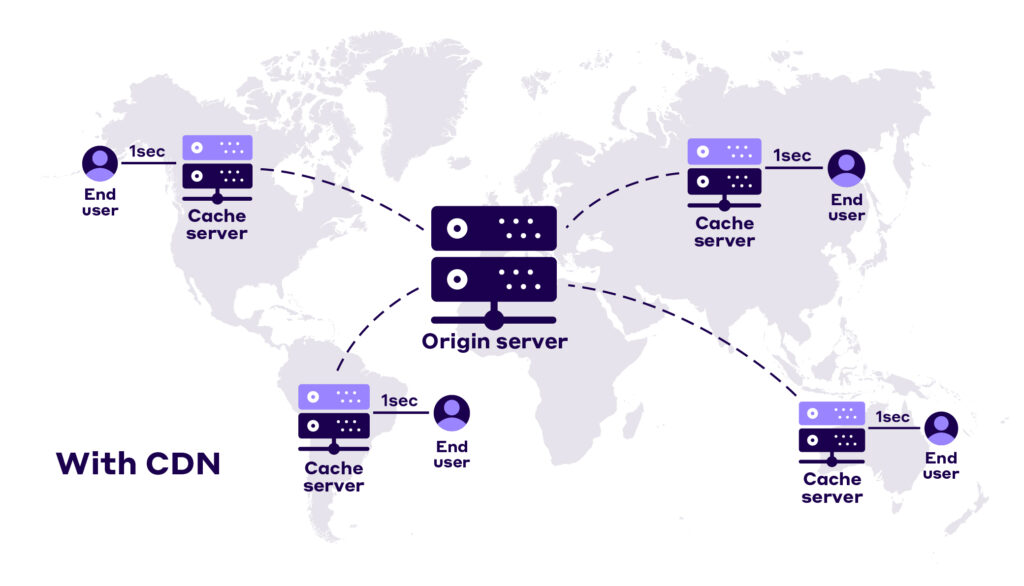

Third, web caches exist all over the world.

You can save copies of your website in locations close to your users. That way, the data doesn’t have to travel all over the world. In essence, this is what CDN providers do.

We’ll talk about CDN caching in a bit.

Finally, storing copies of your website’s files in different locations reduces some of the risks of downtime.

While caching isn’t the only way to mitigate this risk, it certainly plays a vital role.

Now, let’s talk about the different types of web caching.

Caching Types

Based on different criteria, you can find tons of caching classifications:

Public, private, client-side, server-side, page caching, opcode and object caching, and more.

But for now, you need to understand the two crucial distinctions when it comes to web caching:

- Browser caching;

- Proxy server caching.

We’ll also touch on private and public caches. They’re essential to the concept of storing responses in different places and for different purposes.

Let’s get started.

Browser Caches

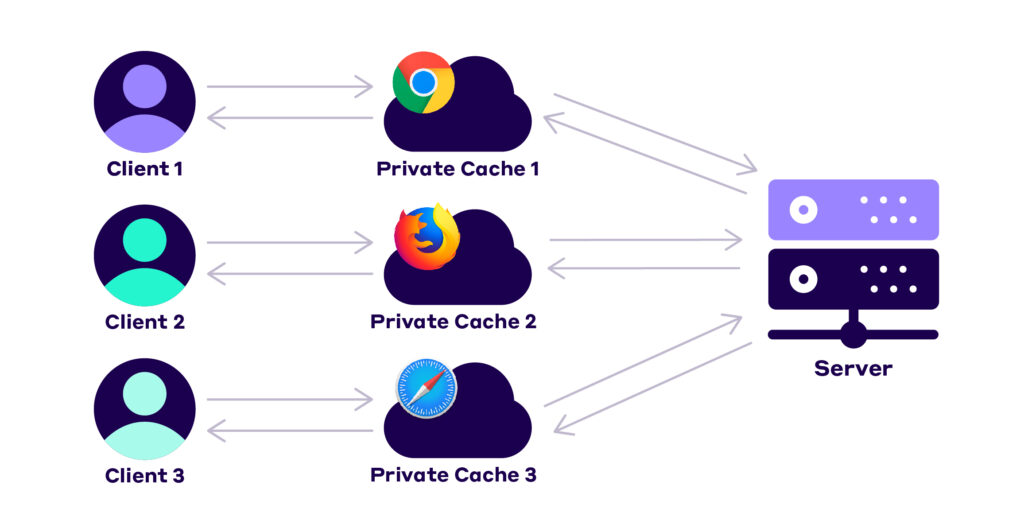

The browser you use maintains a small cache just for you.

Each browser has its own caching policy that determines which resources it saves.

This may be content that’s specific to you as a user or other resources that are likely to be downloaded again.

Browser caches are incredibly useful. Since the information is stored on your computer, it loads instantly when you request it. That’s the main reason why your browser’s back and forward buttons can work their magic.

Because the saved resources are dedicated only to you, browser caches are a type of private cache.

In short, browser caches are awesome.

Unfortunately, we can’t rely on them for everything.

Here’s why:

Browser caching is fairly simple and limited. And because browsers set the caching policy, you don’t have enough control over the process.

Also, these caches only serve content to one user. While useful for some resources, this approach lacks flexibility.

That’s why we need proxy server caches.

Proxy Server Caches

Proxy servers are distributed all over the world.

They’re maintained by third-party organizations like ISPs and CDN providers.

Now, proxy servers are a long and complex topic.

For the sake of simplicity, I’m glossing over some of the details here. For example, I’m omitting the technical differences between proxies, gateways, and reverse proxies. If you want a deep dive on the subject, check out this article on proxy servers.

Now, back to the matter at hand.

Proxies act as intermediaries between the client and the origin server. You can use them to cache content in different locations.

That way, your content is closer to the users, reducing latency and network traffic.

Also, proxy caches can serve content to multiple users, i.e., they can be used as shared caches.

The example describing how caching works in the previous section is also an example of a shared cache.

And since the proxy is a server (just like the origin server), you can configure the caching policy. This makes proxy caches much more flexible than browser caches.

But there’s still one problem:

Setting up and monitoring proxy servers is hard. You need a lot of technical skills, not to mention time and resources.

That’s why everyone uses a CDN provider.

CDNs (Content Delivery Networks) are networks of servers spread out across the globe. CDN providers let you use that network through an admin panel, making it easy to configure and monitor tons of proxies all over the world.

Some of the most popular CDN providers are Cloudflare, Akamai, and Rackspace.

How To Set Up Caching Rules

As a website owner, you can manage your caching policy through the HTTP headers we talked about.

Response headers in particular control most of the caching process.

You can modify these headers through your web server’s configuration.

If you aren’t sure how your web server works, here are links to the most popular web server caching configurations:

Once you go through the documentation, you’ll find that a lot of the heavy lifting has been done for you. Especially if you use a CMS like WordPress.

Still, it’s good to have at least a general understanding of how your website’s caching policy works.

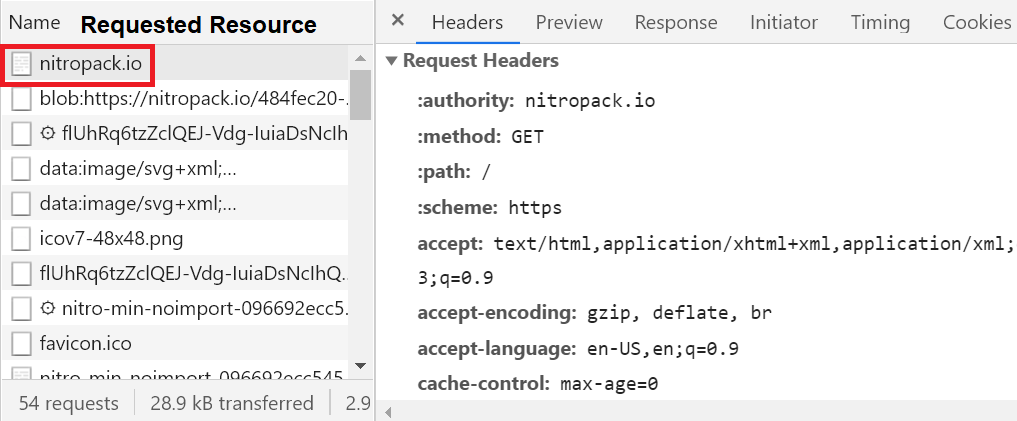

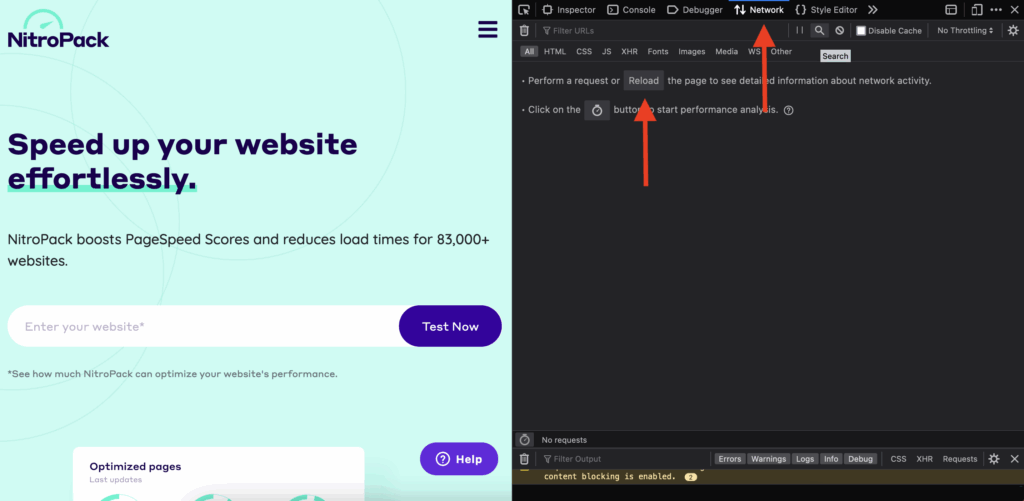

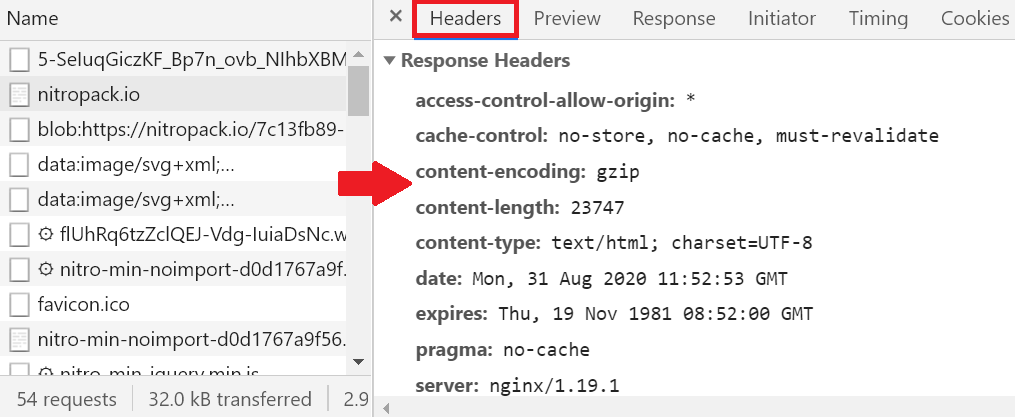

You can start by analyzing the request and response headers with Chrome’s DevTools.

Open your website, right-click and select “Inspect”.

From there, go to “Network” and refresh the page.

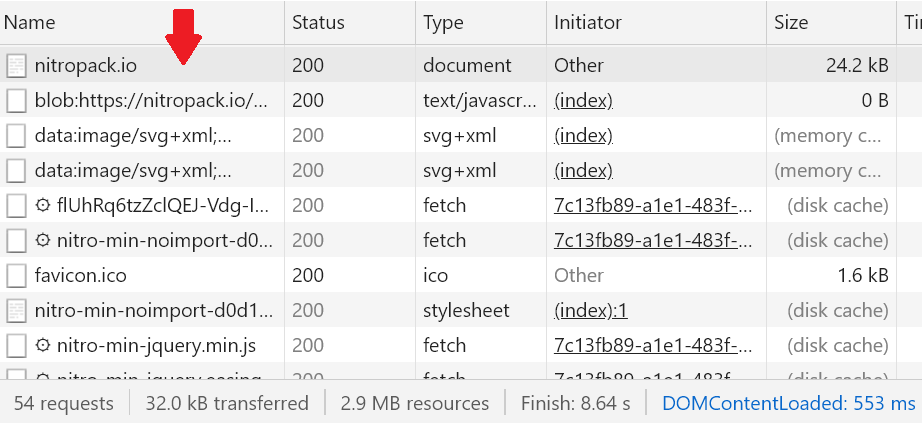

Once you do that, the browser will record the network activity.

From there, you can click on a resource to see the HTTP request-response model in action.

If you scroll down, you’ll find the request and the response headers.

Now, there are tons of headers you can find out there.

But when it comes to caching, you absolutely must know how the expires and the cache-control headers work.

Let’s start with the easier one.

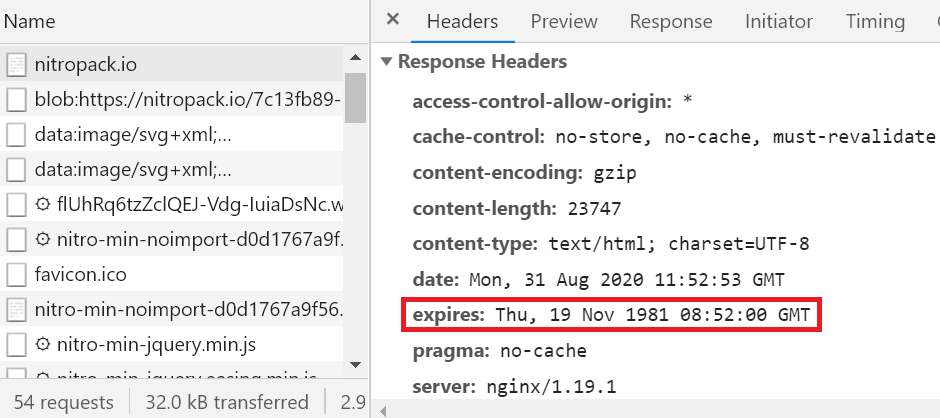

Expires Header

The expires header is pretty straightforward.

It tells the cache how long the representation (or response) should be considered fresh.

Here’s how it looks in a real HTTP response:

As you can see, this header contains a date. Nothing more.

This makes it extremely easy to work with. However, the expires header lacks a lot of flexibility compared to the cache-control method. That’s why you shouldn’t rely on it too much.

Nowadays, the expires header is mostly used as a fallback to the cache-control header.
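As a rough sketch of how a cache could interpret the expires header, the Python snippet below parses the date and compares it with the current time. The date value and the is_fresh_by_expires helper are assumptions made for this example.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def is_fresh_by_expires(expires_value, now):
    """Return True while `now` is earlier than the date in the header."""
    expires_at = parsedate_to_datetime(expires_value)
    return now < expires_at


# Illustrative header value in the standard HTTP date format.
expires_header = "Wed, 21 Oct 2015 07:28:00 GMT"

before = datetime(2015, 10, 21, 7, 0, 0, tzinfo=timezone.utc)
after = datetime(2015, 10, 21, 8, 0, 0, tzinfo=timezone.utc)

print(is_fresh_by_expires(expires_header, before))  # True
print(is_fresh_by_expires(expires_header, after))   # False
```

Because the header carries an absolute date, the result depends on the clocks involved, which is one of the reasons relative lifetimes like max-age are usually preferred.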

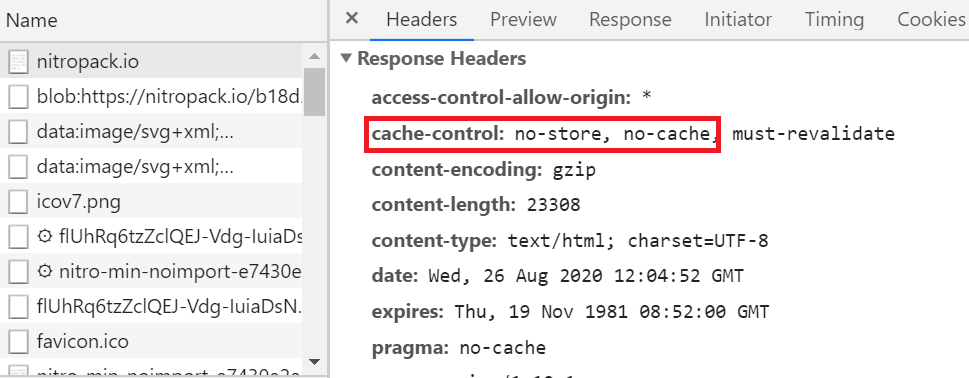

Cache-Control Header

The cache-control header was created to address the limitations of the expires header.

And it does just that.

It’s much more flexible and practical than its predecessor. At the same time, it’s a bit more complex to use.

Here’s why:

You can use this header to set a ton of different rules. Unlike the previous one, cache-control can work with multiple options and values, like:

- no-store instructs web caches not to store any version of the resource under any circumstances;

- no-cache tells the web cache that it must validate the cached content with the origin server before serving it to users. We’ll talk about validation and freshness in a bit;

- max-age sets the maximum amount of time that the cache can keep the saved resource before re-downloading it or revalidating it with the origin server. It takes its value in seconds. For example, cache-control: max-age=31536000 (one year, which is the value conventionally used as the upper limit) tells the web cache that the resource must be considered fresh for one year from the time of the request. After that, the content is marked as stale;

- s-maxage does the same thing as max-age but only for shared caches like proxies and CDNs, where it takes priority over max-age;

- private tells the web caches that only private caches can store the response;

- public marks the response as public. Any intermediate caches can store responses marked with this instruction;

- must-revalidate tells the cache to strictly obey the freshness information you provide. For example, if you set cache-control: max-age=31536000, must-revalidate, the web cache can’t serve the content once it becomes stale without first revalidating it with the origin server;

- proxy-revalidate is the same instruction, but only for proxies.

As you can see, cache-control offers a lot of functionality. That’s why nowadays it’s the go-to caching header.
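To show how a cache might read these options, here’s a simplified Python sketch. parse_cache_control and may_store are hypothetical helpers written for this guide. They cover only the no-store and private rules from the list above, not the full HTTP caching specification.

```python
def parse_cache_control(value):
    """Turn a cache-control header value into a dict of directives."""
    directives = {}
    for part in value.split(","):
        part = part.strip().lower()
        if not part:
            continue
        name, sep, arg = part.partition("=")
        # Directives without a value (e.g. no-store) are stored as True.
        directives[name.strip()] = arg.strip().strip('"') if sep else True
    return directives


def may_store(directives, shared_cache):
    """Decide whether a cache is allowed to keep a copy of the response."""
    if "no-store" in directives:
        return False
    if shared_cache and "private" in directives:
        return False
    return True


policy = parse_cache_control("private, max-age=600")
print(policy["max-age"])                     # 600
print(may_store(policy, shared_cache=True))  # False (proxy or CDN)
print(may_store(policy, shared_cache=False)) # True  (browser)
```

The same header gives different answers to a browser cache and a proxy cache, which is exactly what the private and public options are for.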

Now, before getting into the specific strategy tips, we need to cover two more vital concepts.

Without them, you might as well discard caching altogether.

I’m talking about freshness and validation.

Cache Freshness and Validation

Imagine a news website that covers global events 24/7, for example, BBC.com.

The website is updated constantly throughout the day. The people who manage it probably don’t want to set long expiry times in their caching policy.

In fact, the HTTP response to the request for their homepage is set to expire after 60 seconds.

So, what happens during and after those 60 seconds?

Well, during this timeframe, the cached representation is considered fresh. The web cache can serve it without contacting the origin server (unless there’s a no-cache rule in place).

Now, after the minute is up, the content is marked as stale. In most cases, caches can’t serve stale content.

In general, the web cache has to contact the origin server and re-download the response.
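The fresh-versus-stale check can be sketched in a few lines of Python. CachedResponse and is_fresh are hypothetical names, and the timestamps are made-up numbers that mirror the 60-second example above.

```python
from dataclasses import dataclass


@dataclass
class CachedResponse:
    body: str
    stored_at: float  # time the response was cached, in seconds
    max_age: int      # freshness lifetime from max-age, in seconds


def is_fresh(entry, now):
    """A cached response is fresh while its age is below max-age."""
    age = now - entry.stored_at
    return age < entry.max_age


homepage = CachedResponse(body="<html>...</html>", stored_at=1000.0, max_age=60)

print(is_fresh(homepage, now=1030.0))  # True  (30 seconds old)
print(is_fresh(homepage, now=1061.0))  # False (61 seconds old, stale)
```

While the check returns True, the cache can answer on its own. Once it returns False, the cache has to go back to the origin server.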

But there’s a problem with this process:

You don’t want to re-download resources that haven’t changed since the last time they were saved in the web cache.

That’s unnecessary overhead for your origin servers. Not to mention a waste of time and bandwidth.

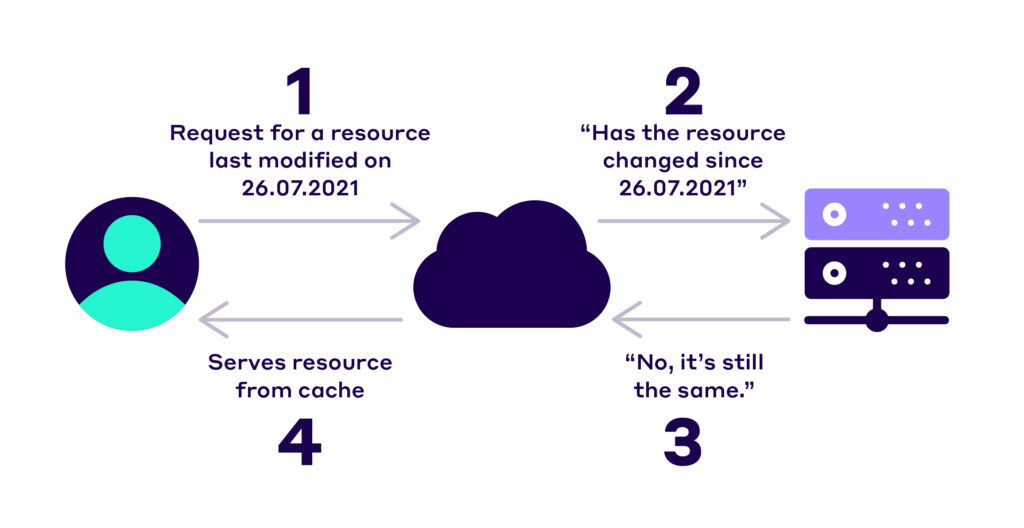

This is where validation comes in.

Validation allows the cache to ask the web server if the expired cached response can still be served to visitors.

If the server confirms that nothing has changed (with a 304 Not Modified response), the cache can keep serving its stored copy instead of re-downloading it.

And that’s the beauty of validation:

It allows us to serve up-to-date content while still keeping all the benefits that come with caching.

So, how can we set this up?

Well, we put validators in our good ol’ HTTP headers.

There are two main validation methods you can use:

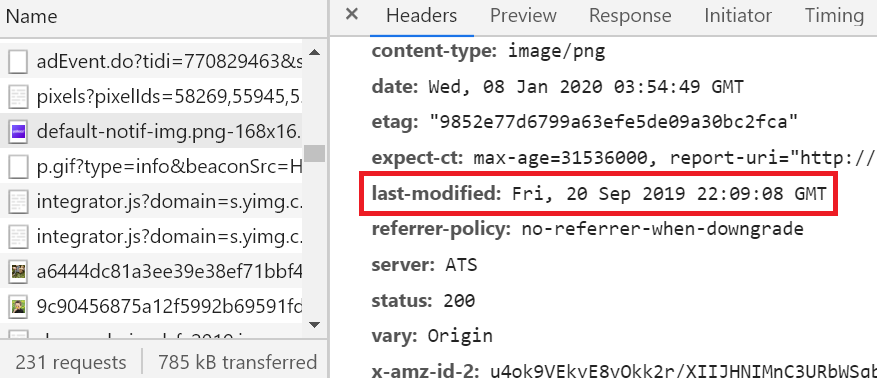

- The last-modified and if-modified-since headers

- The etag and if-none-match headers

Most modern web servers use both validation methods automatically for static resources.

Using the last-modified and if-modified-since headers

First, you can include the last-modified validator in the initial response to the web cache like this:

Once the web cache has this data, it can ask the web server if the original content has been updated since then.

This is done with the if-modified-since header in the next request.

If the resource hasn’t been modified, the web server confirms that the stale representation can be served.
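Here’s a minimal sketch of how an origin server could handle this exchange, written in Python. respond_with_last_modified is a hypothetical function, and the dates are illustrative. Real web servers do this for you for static files.

```python
from datetime import datetime, timezone
from email.utils import format_datetime, parsedate_to_datetime


def respond_with_last_modified(last_modified, if_modified_since=None):
    """Return (status, headers) for a request with an optional validator."""
    headers = {"Last-Modified": format_datetime(last_modified, usegmt=True)}
    if if_modified_since is not None:
        cached_copy_date = parsedate_to_datetime(if_modified_since)
        if last_modified <= cached_copy_date:
            # Nothing changed: 304 Not Modified, no body is sent.
            return 304, headers
    # First request, or the resource changed: send the full response.
    return 200, headers


modified = datetime(2015, 10, 21, 7, 28, 0, tzinfo=timezone.utc)

# First request: the cache receives the validator.
status, headers = respond_with_last_modified(modified)
print(status, headers["Last-Modified"])  # 200 Wed, 21 Oct 2015 07:28:00 GMT

# Later request: the cache sends the date back in if-modified-since.
status, _ = respond_with_last_modified(modified, headers["Last-Modified"])
print(status)  # 304
```

The 304 response has no body, so the cache saves the bandwidth of a full download and simply marks its copy as fresh again.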

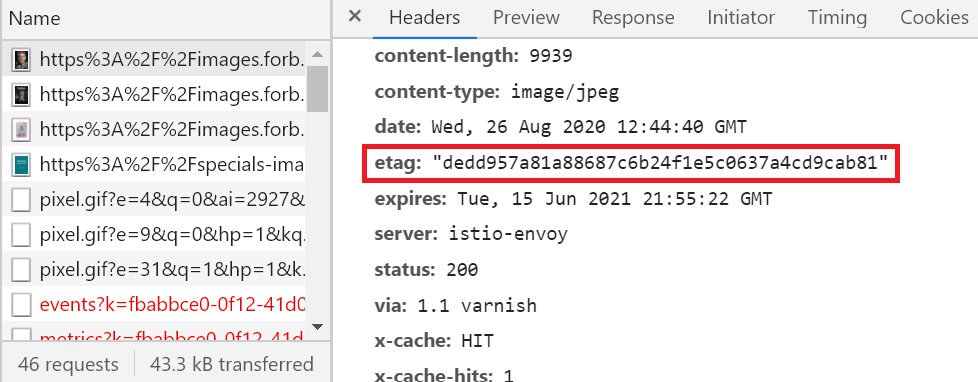

Using the etag validator

The etag works in a similar way to the previous method.

Here, the server creates an etag for the resource when it initially serves it to the cache.

This tag is a unique identifier for the cached resource. It looks like this:

After saving the response, the web cache can send an if-none-match header with future requests to see if this etag has changed.

If the etag still matches, the representation hasn’t changed and the cache can keep serving it. If it doesn’t match, the server sends back the new version.
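The etag flow can be sketched the same way. In this Python example, the tag is derived from a hash of the content, which is just one possible approach and an assumption of this sketch (servers are free to generate etags however they like). make_etag and respond_with_etag are hypothetical names.

```python
import hashlib


def make_etag(body):
    """Build a quoted tag from a hash of the content (one possible scheme)."""
    digest = hashlib.sha256(body).hexdigest()[:16]
    return f'"{digest}"'


def respond_with_etag(body, if_none_match=None):
    """Return (status, headers, body) for a request with an optional etag."""
    etag = make_etag(body)
    if if_none_match == etag:
        # Same tag: the cached copy is still valid, so no body is sent.
        return 304, {"ETag": etag}, b""
    return 200, {"ETag": etag}, body


# First request: the cache stores the response together with its etag.
status, headers, _ = respond_with_etag(b"body { color: black; }")
print(status)  # 200

# Revalidation while the file is unchanged.
status, _, _ = respond_with_etag(b"body { color: black; }", headers["ETag"])
print(status)  # 304

# Revalidation after the file was edited.
status, _, _ = respond_with_etag(b"body { color: navy; }", headers["ETag"])
print(status)  # 200
```

Unlike a date, the tag changes whenever the content does, no matter how quickly the edits happen.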

You’ve now covered the most important concepts in web caching.

Quick Tips For Creating A Caching Strategy

From here, it’s about creating and executing a strategy that’s tailored to your website.

This process will look different for everyone.

But there are a few general tips that almost all websites should implement:

First, get familiar with your web server and CDN provider’s caching policies.

A lot of the stuff we talked about might already be taken care of for you.

Second, use the same URL every time you refer to the same content.

If you have identical content on different pages on your website and serve it to different users, it should have the same URL.

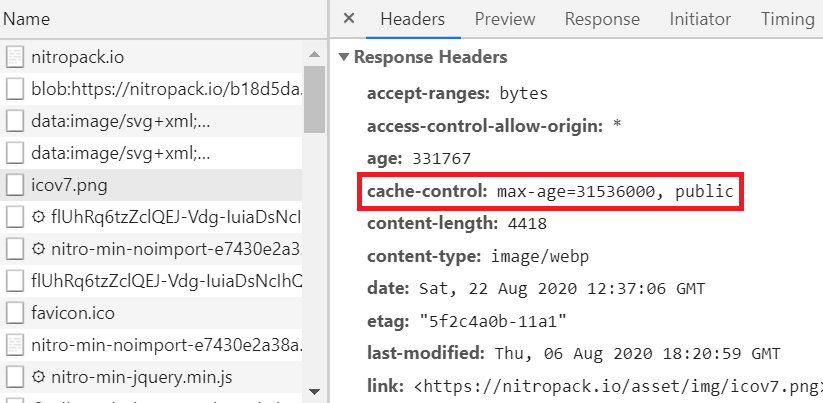

Next, use cache-control: max-age with a large value for static assets that don’t change often. For example, your logo, nav icons, media files, and downloadable content.
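One way to picture this tip is a small function that picks a cache-control value per file. The Python sketch below is only a starting point: the extension list, the personalized flag, and the no-cache default are assumptions for this example, so adjust them to your own website.

```python
import posixpath

ONE_YEAR = 31536000  # seconds

# Assumed list of long-lived static file types; adapt it to your site.
STATIC_EXTENSIONS = {".png", ".jpg", ".svg", ".ico", ".woff2", ".pdf", ".mp4"}


def cache_control_for(path, personalized=False):
    """Pick a cache-control header value for a given URL path."""
    if personalized:
        # Cart pages, account pages, and other user-specific content.
        return "private, no-store"
    extension = posixpath.splitext(path)[1].lower()
    if extension in STATIC_EXTENSIONS:
        return f"public, max-age={ONE_YEAR}"
    # Everything else may be stored but must be revalidated before reuse.
    return "no-cache"


print(cache_control_for("/images/logo.png"))         # public, max-age=31536000
print(cache_control_for("/cart", personalized=True)) # private, no-store
print(cache_control_for("/index.html"))              # no-cache
```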

Also, don’t rely too much on the expires header. In most cases, cache-control is far superior.

Always include a validator – last-modified or etag. It’s a great way to ensure the cached resources’ freshness without sacrificing the benefits of caching.

Not to mention that some web caches refuse to cache content that doesn’t have an age-controlling header and a validator.

It’s also useful to create and use a dedicated directory for images. This makes it much easier to refer to them from different places.

You can get much more advanced, but for now, these tips should be enough to get you on the right track.

For an even deeper dive into this topic, check out Mark Nottingham’s Caching Tutorial.

Choosing A Web Caching Tool

After all that technical jargon, I finally have some good news:

You don’t have to set up all these caching headers by yourself. As I already said, some of the heavy lifting is done for you.

Especially if you’re running your website on WordPress or any other popular platform.

But here’s the catch:

You probably still need a separate caching solution/service/plugin to take care of the advanced stuff for you.

And there are TONS of caching tools out there.

A quick search in WordPress’ plugin marketplace returns over 51 pages of results.

And that’s just for WordPress websites.

A quick Google search for “caching tools” returns about 35,000,000 results.

In short, you have options.

Here are a few things to look for when choosing which service to go with:

First, make sure your caching solution automatically detects changes on your website.

After a change, your caching tool should immediately invalidate the saved response and start updating the cache. All of this should happen automatically and in the background while your website is running.

At NitroPack, we call these features Cache Warmup and Cache Invalidation.

Next, it’s best if your caching tool comes with a built-in CDN.

Some caching solutions allow you to link your CDN with their service. That’s all good, but it can be time-consuming and inconvenient.

In a perfect situation, you’d want to get a great caching tool and a CDN in one swing. Avoid manually syncing both if you can.

Finally, your caching service must provide device-aware caching.

The present and the future are both mobile. And your caching policy needs to reflect that.

Put simply, your caching solution must create dedicated cache files for mobile, tablet, and desktop devices. End of story.
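Conceptually, device-aware caching means the device type becomes part of the cache key. The Python sketch below illustrates the idea with a deliberately naive user-agent check. It isn’t how any particular caching tool works, and device_class and cache_key are hypothetical names.

```python
def device_class(user_agent):
    """Very rough device detection based on the User-Agent string."""
    ua = user_agent.lower()
    if "ipad" in ua or "tablet" in ua:
        return "tablet"
    if "mobi" in ua:
        return "mobile"
    return "desktop"


def cache_key(url, user_agent):
    """Combine device type and URL so each device gets its own cache file."""
    return f"{device_class(user_agent)}:{url}"


phone = "Mozilla/5.0 (iPhone; CPU iPhone OS 17_0 like Mac OS X) Mobile/15E148"
laptop = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

print(cache_key("/pricing", phone))   # mobile:/pricing
print(cache_key("/pricing", laptop))  # desktop:/pricing
```

Since the two keys differ, a phone never receives the cached desktop version of the page, and vice versa.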

Keep these tips in mind while researching caching options.

Everything else really depends on your current setup, needs, and budget.